[This is the second post in a series on Dan Davies’ book The Unaccountability Machine. See also Part 1, Part 3 and Part 4.]

I was surprised to learn today that March 31 was the 25th anniversary of the release of The Matrix. I was living in Norfolk, VA at the time, and I only vaguely recall seeing it in the theater. A lot of the themes, not to mention the special effects, from that movie have become commonplace, but at the time, it was visionary. There had been a few other movies in the cyberpunk genre (Blade Runner (1982) was an obvious precursor, though not a commercial success), but The Matrix had a profound effect on the industry.

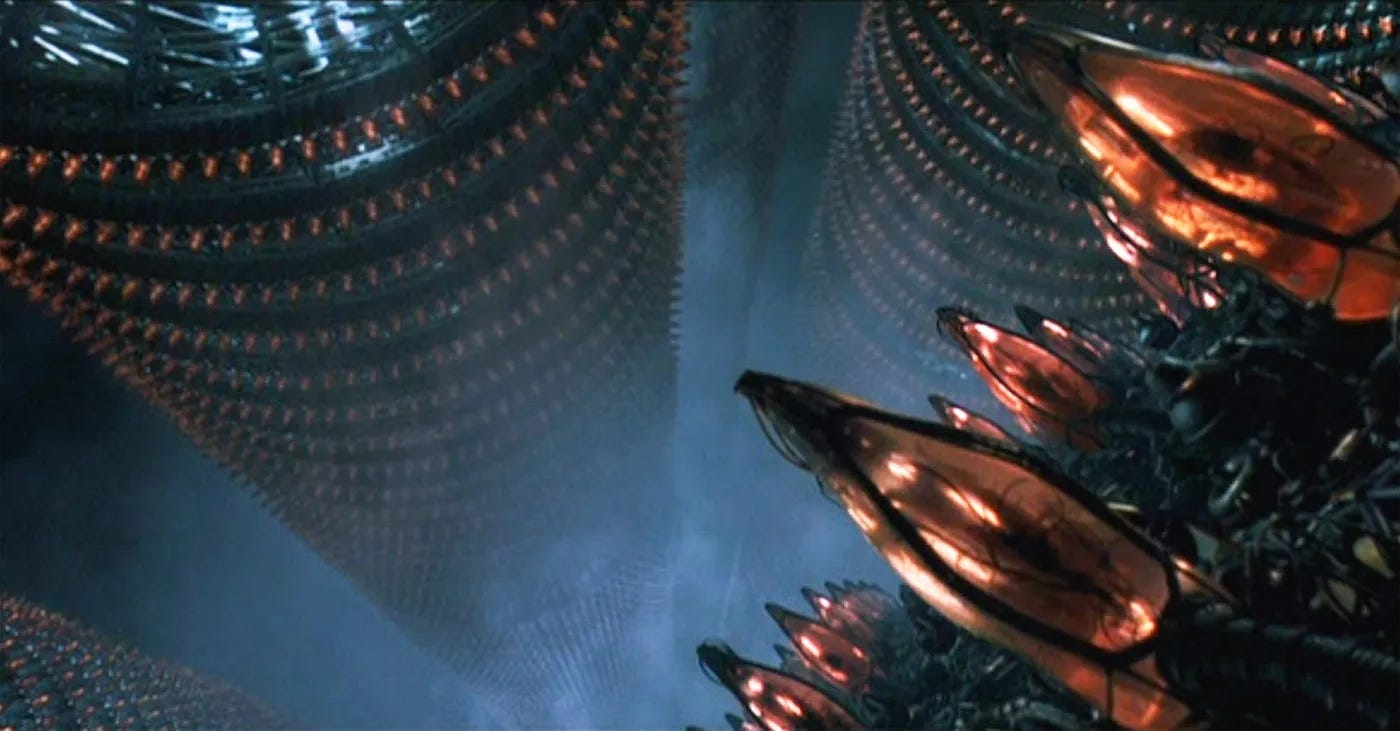

There’s a sublime moment in the movie[1] where (spoiler alert?) we discover that the “reality” of the movie up to that point is in fact the matrix itself, a virtual reality that is being powered by massive towers of human batteries.

Cinematically, it’s kind of like the closing scene of Raiders of the Lost Ark, but with the chilling realization that we’re the ones inside the boxes, the raw material whose only function is to fuel the matrix. When you think about it, that fear, that we will eventually become the victims of our own creations, is a pretty frequent theme in science fiction, from Frankenstein to Terminator (Skynet!) to Jurassic Park to Avengers: Age of Ultron, with many stops in between. Now that I think of it, seeing villains succumb to the horrors for which they are responsible is a brand of catharsis that spans any number of genres.

Small wonder, then, that one of the scenarios that we’ve been using culturally to consider the wisdom and impact of AI is the “paperclip maximizer.” Originally credited to philosopher Nick Bostrom, it’s a thought experiment about the potential for AI to go haywire:

Suppose we have an AI whose only goal is to make as many paper clips as possible. The AI will realize quickly that it would be much better if there were no humans because humans might decide to switch it off. Because if humans do so, there would be fewer paper clips. Also, human bodies contain a lot of atoms that could be made into paper clips. The future that the AI would be trying to gear towards would be one in which there were a lot of paper clips but no humans.

There are lots of variations on this scenario (including one where the AI helps us achieve interstellar travel so that we can harvest raw materials from other planets to turn into paper clips) but you probably get the idea. Even relatively innocuous directives might, in the purview of a general artificial intelligence (or Superintelligence, as Bostrom terms it), lead to the decision that humans present an obstacle that should be removed.

Davies has a section on paperclip maximizers in The Unaccountability Machine early on, because the parallels with economic management are pretty obvious. The monomania of optimization isn’t just a thought experiment or a science fiction trope. “This is not just true of ‘artificial intelligences’ in the sense of computer programs, it’s a problem that could potentially happen to any sort of decision-making system that’s complicated enough to need to be treated as a black box.”

It’s difficult to summarize the final portion of Davies’ book without simply reprinting it here—he covers a great deal of ground and does it really well. But perhaps the most important bit of it is the way he explains that this “problem” has been well underway for a long time, economically. It’s perfectly natural for us humans to manage multiple goals, short-term and long-term, in our heads simultaneously, and to shift our behavior depending on circumstance. I’m using time this afternoon to type this paragraph that could just as easily be spent watching a movie, cooking a meal, grading student essays, paring down my inbox, or cleaning my house. On an ongoing basis, the urgency and necessity of all that we could do becomes part of the calculus by which we figure out what we will do. Broader decision-making systems need to be much simpler, though.

So it seems completely normal, in the context of a business, to elevate profit to a top-level goal, and to design around that prime directive. Our commonsense notion of free markets holds that a group of such self-interested organizations, in competition with one another, will produce reasonably accessible goods and services, encourage innovation, and improve society in the process. For a long time, that’s what “managers” were in charge of accomplishing. “In the era of capitalism that J. K. Galbraith and Herbert Simon described, the giants of corporate America were content with being big and important; they didn’t feel the need to get every last bit of cash that could possibly be extracted.” And that dated understanding of free markets is still the same one that gets trotted out these days (and compared to which, anything else is accused of being socialism) to excuse the economic abuse we suffer today.

One of the shifts that Davies describes is “the capitalist counter-revolution against the managerial class,” the outsourcing of management to consultants, who function as accountability sinks to help expel anything that isn’t directly related to profit maximization. (Higher education is going through this right now.) And not just optimization, but optimization NOW. “The decision-making system of a modern corporation has a particular dysfunction: one of its signals has been so amplified that it drowns out the others.” That classic understanding of free markets is one of the things that economics has sacrificed to the amplified growth-and-profit-at-all-costs signal that drives these systems. The idea that you might hold some institutional loyalty to your own employees seems quaint when you consider how many of the most profitable companies in the world continue to invest hundreds of billions in stock buybacks while laying off employees. Optimization explains why so much of our most recent dance with inflation was driven by corporate greed. And our political system, which was once in a place to check that impulse, has been eroded in places and captured in others, to the degree that it functions instead as a line of defense to keep anything from interfering with the pursuit of wealth. “Two decades into the twenty-first century, it’s easy to think of the crazy ideology of profit maximisation as inescapable.”

Private equity is the velociraptor of this particular ecosystem. If at first, it functioned like a forest fire creating space for new growth, injecting some disruption and new opportunities into metastasized markets, its purpose has shifted. “The ‘private equity’ industry, as the leveraged buyout financiers liked to be called, was an extremely efficient predator–compared to all the other non-human decision-making systems, it was much less slow-moving and very aggressive.” This style of finance began in what Davies describes as a “target-rich environment,” companies where the worst excesses of managerialism had taken root. But private equity, once it’d hammered all of the obvious nails, started looking for other opportunities and seeing nails wherever it set its sights. I’ve written before about a couple such targets, but there are many others: the social value of journalism and media far exceeds its profitability, unfortunately, and so we’re starting to see piles of paperclips where once we had journalism. (We’ve already lost most of the retail businesses that folks of my generation grew up with, but those piles seem even more sinister when they replace, say, hospitals and assisted living facilities.)

Although greed and profit are certainly bad enough, it’s the laser focus on optimization that’s the real issue. And a big part of that is how efficient our tools (technological, legal, political) have become in support of that single-mindedness.[2] Davies doesn’t suggest that we abandon these processes, or necessarily go back to an earlier age, but we have to figure out how to balance short-term profiteering against long-term sustainability. “This is the paradigm shift that might be required–that organisations and systems can be like people, having purposes without a single goal.” Right now, those other purposes are largely a smokescreen[3] to disguise their monomania. But they also include things like treating employees fairly, conducting business in an environmentally responsible fashion, and developing positive reputations and/or customer loyalty: goals that might benefit the system (and the rest of us) in the long run, even if they come at the immediate cost of smaller shareholder dividends.

I am not, as I’ve admitted before, an economist, but Davies’ book is clear enough to persuade me that there are alternatives. One of the first steps, I think, involves facing up to the fact that we’re the ones inside those (spreadsheet) boxes, and we can revise the terms of service that put us there. Davies writes that “Businesses ought to be like artists, not paperclip maximisers,” and there’s enough to his analysis to make me believe that this might be possible.

I’ve got at least one or two more posts in me about Davies, I think. There’s still a lot that I’ve left out, in the interest of brevity. More soon.

[1] I’ll be honest: even just writing about it makes me want to go back and watch the film so I can timestamp this reference.

[2] I happened across Freddie deBoer’s recent piece on optimization, which touches on everything from resale markets to sports to dating apps to tourism. AI-facilitated optimization is already blanketing our culture. “[W]e’ve opened a Pandora’s box,” he writes, “by providing the world with immense amounts of easily-accessed information, and so of course we have many ambitious people doing everything they can to exploit it - often to the detriment of the rest of us.”

[3] Just in case you wondered, this is a place where my own interests in irony emerge. Davies talks a bit about Stafford Beer’s acronym POSIWID (the purpose of a system is what it does), which we tend to ignore in favor of what a company says it does.