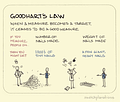

This is a post that’s been brewing for a while now, and it collects a couple of recent items alongside one that’s been on-and-off in my deep archives for many years. It was prompted by a thread I saw about cognitive fallacies (sadly, I forgot to bookmark it). The best thing about that list was that each of the fallacies was accompanied by an illustration from the excellent Sketchplanations, and thus I present to you Goodhart’s Law.

If you want to dig into the history of Goodhart’s Law and its variations, the Wikipedia page on the phenomenon is pretty solid. The weird thing about the Law for me is that there have been a number of times over the years where I’ve found it useful, and assumed that I would subsequently remember its name. But then I didn’t. I’ve probably had to google it ten or twelve times stretched across a couple of decades. There’s a corollary to the “fool me twice, shame on me” adage that could probably be named after my unwillingness to write things like this down. (Hopefully, this post will do the trick for me.)

Anyway, though, Goodhart’s Law is a pattern of behavior that we can find across just about every field of endeavor, and perhaps more to the point of my Substack, it’s another great example of how scale can induce deception or distortion. The explanation above is pretty concise, so I want to tease it out just a bit. Charles Goodhart himself was an economist and was talking about monetary policy, but the law itself applies to any circumstance where we’re thinking about any sort of success. The question becomes how we measure that success, and that’s where synecdoche comes into it.

Synecdoche is the trope that connects part to whole; any measure of success is necessarily a narrower representation of it. Here’s an obvious example: if you want to know that a student has done well in a course that I’ve taught, you could hang out with that student for the semester, watch as they complete all the work, talk to them, attend the course meetings, etc., and by the end of it, you’d have a pretty good sense of how they’d done. Or you could just check their transcript, see that they received an A-, and arrive at the same conclusion for a lot less energy and effort. Want to know how they did all four years? That’s what a GPA is for. Want to know how that GPA compares to someone else’s? Universities and programs are ranked annually by a number of different sources, each of which has its own measures to determine institutional quality. Our lives are rife with synecdochic indications of the things we’ve done. So far so good.

Goodhart’s Law intervenes at the point where those measures themselves become “targets.” If your goal is to get into a graduate program, and you know that they expect a minimum GPA, then it may be more strategic for you to take courses that you know will be easier, so that you can get better grades, and raise your GPA. In the long run, you aren’t preparing yourself as well as you could for graduate school, but the very best preparation in the world will mean nothing if you don’t get in. In such a case, your GPA might not be entirely meaningless, but it’s certainly on the way. We see the same thing happen on sites like Goodreads (ahem), Amazon, IMDb, Rotten Tomatoes, et al., in the form of review bombing, both positive and negative. A global rating or judgment can be manipulated in various ways, making it less and less accurate (or valuable).
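For readers who think better in code, here is a minimal sketch of that GPA scenario. Everything in it is invented for illustration: the course names, the expected grades, and the "preparation" scores are hypothetical numbers, not data from any real program. It only shows the shape of the problem: choosing courses to maximize the measure (GPA) and choosing them to maximize the thing being measured (preparation) produce different schedules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Course:
    name: str
    expected_grade: float  # grade points on a 4.0 scale (hypothetical)
    preparation: float     # how well it prepares you, 0-10 (hypothetical)


# A made-up catalog: the easier a course, the less it prepares you.
CATALOG = [
    Course("Easy elective A", 4.0, 2.0),
    Course("Easy elective B", 3.9, 3.0),
    Course("Survey course", 3.7, 5.0),
    Course("Research methods", 3.3, 8.0),
    Course("Advanced seminar", 3.0, 9.0),
    Course("Thesis workshop", 3.1, 10.0),
]


def pick(catalog, key, n=3):
    """Choose the n courses that score highest on the given key."""
    return sorted(catalog, key=key, reverse=True)[:n]


def gpa(courses):
    """The measure: average grade points across the chosen courses."""
    return sum(c.expected_grade for c in courses) / len(courses)


def preparation(courses):
    """The thing the measure stands in for: average preparation."""
    return sum(c.preparation for c in courses) / len(courses)


# Strategy 1: treat the measure as the target.
gaming = pick(CATALOG, key=lambda c: c.expected_grade)
# Strategy 2: aim at what the measure was supposed to represent.
learning = pick(CATALOG, key=lambda c: c.preparation)

for label, schedule in (("gaming", gaming), ("learning", learning)):
    print(f"{label}: GPA {gpa(schedule):.2f}, "
          f"preparation {preparation(schedule):.2f}")
```

In this toy catalog the "gaming" schedule wins on GPA and loses badly on preparation, so an admissions committee reading only the GPA would rank the two students in the wrong order.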

So if establishing a metric is the first stage in this process, and Goodhart’s Law applies where that measure declines rapidly in value, there’s an intermediate step here, one that the law leaves out. And that’s the place where someone begins to game the measure/system. The sketch above provides a couple of nice examples, and the idea of taking a few “easy As” to pump one’s GPA is another. In health care, we might liken this to treating the symptom rather than the underlying problem—the way we might pop a couple of ibuprofen for a stress headache rather than removing ourselves from the situation that’s causing the stress. This isn’t an intrinsically bad thing—there are plenty of things we do that are short-term tactics that make sense in the moment, like drinking an extra cup of coffee because we haven’t slept well the night before. But we’re still gaming the system (i.e., substituting proximal (metonymic) strategies for long-term (synecdochic) stability, if you want to read this in terms of my own project).

If you wanted to write a book(s)-length study of Goodhart’s Law, one of the best places to look would be the history of search engines, and search engine optimization (SEO). Search engines are quite literally shortcut machines—they “read” the web in order to provide us with accurate responses to our queries, and their value (to us) consists almost entirely of their ability to generate “success.” In order to do that, they have to have some way of measuring and ranking pages. I don’t want to go too deep into the various means by which search engines operate, but the arms race between those developers and the folks trying to game their systems for an advantage (and the subsequent monopolization of search) has had a massive impact upon our quality of online life. It’s also one of the contexts where Goodhart’s Law has come up for me in my own research.

But the piece that prompted my thoughts today is an essay from HouseFresh, a company that tests, reviews, and rates products related to indoor air quality. The essay is called “How Google is killing independent sites like ours,” and trust me when I say that it’s worth your time to read it in full. I’ve written here before about how private equity firms have sucked up much of the traditional media that I grew up with, gutted it, and then slapped its brand on shit, but the good folks at HouseFresh bring the receipts. They show how a host of once-reputable brands—like Better Homes and Gardens, Popular Science, Rolling Stone, Real Simple, BuzzFeed, and Forbes—are now just sludge factories for generating traffic, capitalizing on brand recognition to crowd out genuinely useful information. It’s a shocking read, to be honest. And if these sites were simply recycling others’ work, that might be one thing, but this article demonstrates the degree to which these sites are actively doing harm:

> For example, Better Homes & Gardens recommends the Molekule Air Mini+ as their best option for small rooms… We have no idea how this device made the list considering that Molekule recently filed for bankruptcy, has active class action lawsuits for false advertising, has been recognized by Wirecutter as the worst air purifier they tested, and received the honor of being labeled as “not living up to the hype” by Consumer Reports.

Wait, because there is some idea about how a crappy device from a company that no longer exists might have made the list (“it comes with a juicy commission compared to other better quality yet budget products.”). HouseFresh provides example after example of all of the shortcuts in play here—from copy-pasting Amazon reviews to seeding their content on Reddit with fake accounts to using internal stock photos across multiple “brands” owned by the same equity firm—all of which add up to a massive Goodhart network of fraud, one that’s incentivized by the measures that Google has in place to adjudicate a site’s value.

> If a magazine they trust tells them the Molekule Air Mini+, the PuroAir HEPA 14 240, and the Okaysou AirMax 10L Pro will help with their pet allergies, their asthma flare-ups, the air pollution that gets through the windows, the wildfire smoke blowing in their direction, the mold spores in their damp apartment, or recurring flu outbreaks in their school, then they’ll go and buy one of those useless, overpriced units.

Everybody loses but the investment firm.

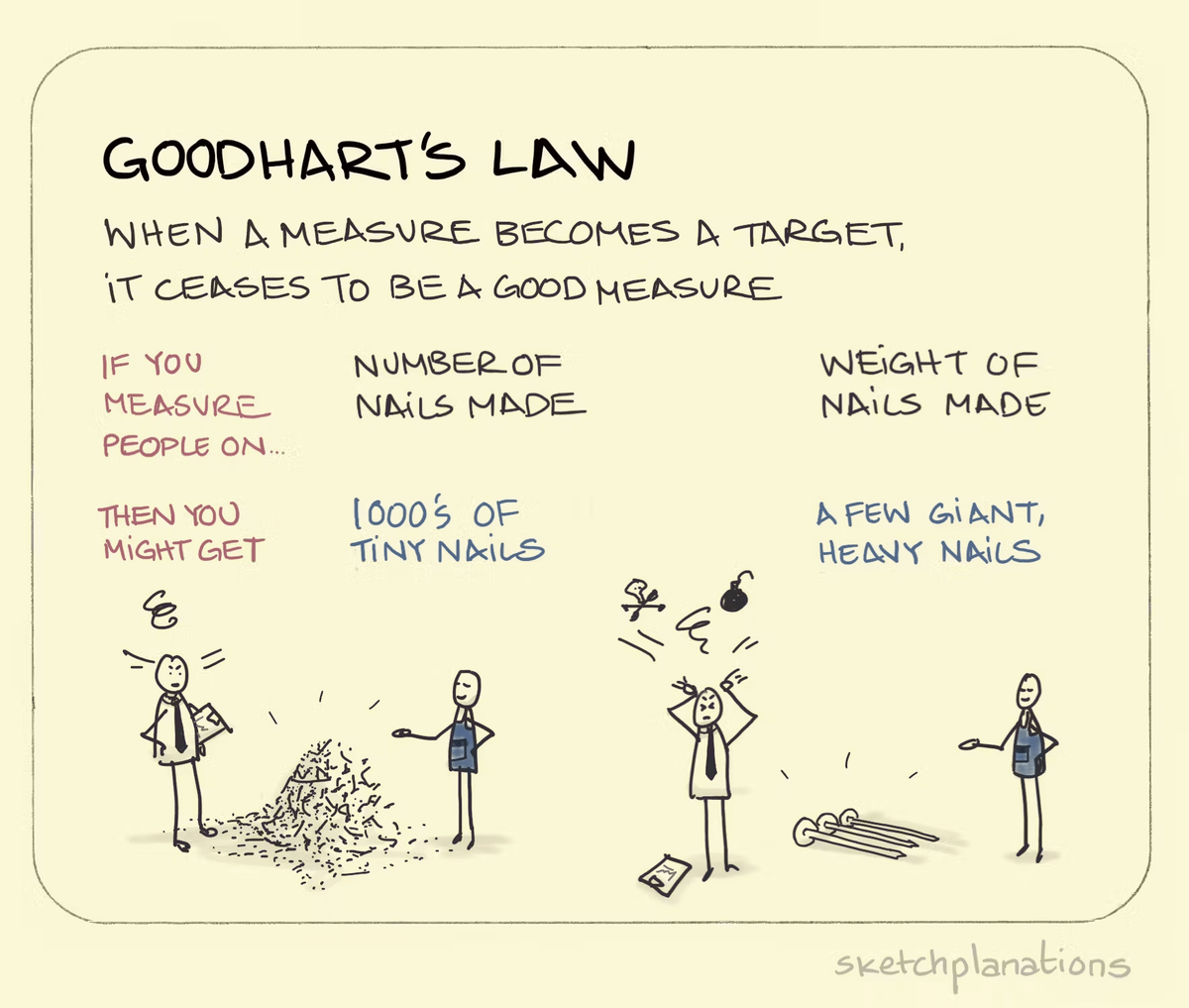

When I was reading Filterworld reviews, I didn’t really have the space or the context to include a particularly salient point from Kevin Munger, but I’ll share it here. Part of the problem with blaming “the algorithm” for all of this is that there’s a Goodhart subtext to algorithms. Any sort of system (digital, organizational, human) beyond a certain size relies on algorithms. But the key issue is how those algorithms interact with the metrics we use to measure success. And so, according to Munger, the real issue with social media was “the switch away from metrics of recommendation quality in favor of ‘captivation metrics’ like the famous ‘time spent on site.’ Which is the audience metric that maximizes profits.”

In the context of HouseFresh’s complaint, none of these other sites (or the firms that own their names/brands now) could give two shits about whether their recommendations are useful or whether they might endanger the lives of the people who take them on good faith. They care only about the traffic they’re able to generate, because that traffic is the product that they sell to advertisers. They’re free to lie outright to us so long as those lies look enough like the truth to fool Google’s metrics.

[If this last line resonates a little, that may be because it echoes the problem that many people experience with genAI and LLM hallucinations. Whether or not a source is real has no bearing on its ability to adhere to the pattern of sourcework that the LLM has been trained on. In other words, AI (and AI “search”) will only make the problem I’m describing here worse—it’ll make it that much faster to turn measures into targets. Like Amazon manipulating prices in real time to bankrupt competitors, there’s no reason to imagine that real-time SEO manipulation isn’t already here.]

Munger cites (and recommends) Nick Seaver’s essay “Captivating algorithms: Recommender systems as traps.” It describes, in part, the shift away from recommender systems as discovery tools for users to “persuasive technologies” whose goal it is to capture us. As Mike, a pseudonymous engineer for a “personalized radio company” explained, his job (early on) was to refine algorithms to provide users with a better experience. Now? “I’m just trying to get you hooked.” Seaver explains how “persuasive technologies work in the blurry middle” “between coercion, figured as material or technological, and persuasion, figured as mental or cultural.” I could write an entire post on Seaver’s essay—it’s a really engaging read—but I want to bridge from the “captology” of these systems to one more article that drew some attention this week, Ted Gioia’s “State of the Culture, 2024.”

It’s a grim look at things, one that feels more overwhelming the more I consider it. Gioia writes that “it’s a bigger issue than just struggling artists or floundering media companies,” and I’m inclined to agree. Rob Horning critiques the behaviorism of this diagnosis, but honestly, having just read Seaver (and having engaged with some of the “persuasive technologies” work in the past), I’m not quite sure where I fall. I do agree with Horning’s claim that describing this in terms of “dopamine,” which euphemizes the entire ecology of factors, is not the best strategy. “Dopamine is a nonexplanation that renames the facts of social predation and exploitation and naturalizes them, no matter how much we might complain about it.” To put it in language I’ve used here before, to describe it as dopamine runs a very real risk of mythologizing these broader forces, right when we should be looking at them more closely.

I know that this is a bit of a shrug ending, and this post ended up a lot more complicated than it started, but Goodhart’s Law provides a really interesting pathway into a lot of the deeper questions that I’m trying to ask and answer with my project, so I’m sure I’ll return to pieces of this post in the future. See you later.

(In the meantime, if you’d like to consider an inverted example of Goodhart’s Law, look no further than the (staggering absence of) Supreme Court ethics that John Oliver tackled last week. The “polite fiction” of the “personal hospitality” exemption to gift reporting is a great example of a measure that got distorted and exploited beyond any reasonable interpretation to include all of the alleged corruption that Clarence Thomas has engaged in.)

Are you familiar with C. Thi Nguyen's concept of value capture? It echoes your analysis in this post, and also relates to some of the other writing you've been doing around content as capital, influence, and the pump & dump bestseller list schemes.

https://philpapers.org/rec/NGUVCH

In Terry Gilliam's 'Brazil', Sam's dining companions choose entrées from beautiful 'Art Culinaire' menu images and are served three lumpy green scoops of glop; in modernist Amazonia our fellow consumers choose the five-star anti-fungal cream that's procured for just us, popped up on our screen, and we get three tubes of green lumpy glop. And we get it in two days. Progress. I had no idea how insidious the system's become. And how completely shittified; but I burned my 'membership' down eight years ago.